There is something oddly fascinating about radio waves, radio communications, and the sheer number of innovations they have enabled since the end of the 19th century.

What I find even more fascinating is that it is now very easy for anyone to get hands-on experience with radio technologies such as LPWAN (Low-Power Wide Area Network, a technology that allows connecting pieces of equipment over a low-power, long-range, secure radio network) in the context of building connected products.

Nowadays, not only is there a wide variety of hardware developer kits, gateways, and radio modules to help you with the hardware and radio side of LPWAN communications, but there is also open-source software that allows you to build and operate your very own network. Read on for some insights into what it takes to set up a full-blown LoRaWAN network server in the cloud!

A quick refresher on LoRaWAN

LoRaWAN is a low-power wide-area network (LPWAN) technology that uses the LoRa radio protocol to allow long-range transmissions between IoT devices and the Internet. LoRa itself uses a form of chirp spread spectrum modulation which, combined with error correction techniques, allows for very high link budgets; in other words, the ability to cover very long ranges!
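To give a rough idea of what a "very high link budget" means, here is a small back-of-the-envelope calculation. It is only a sketch built on the textbook receiver-sensitivity approximation (thermal noise floor of -174 dBm/Hz, plus bandwidth, receiver noise figure, and the minimum SNR LoRa can demodulate at a given spreading factor). The 6 dB noise figure, 125 kHz bandwidth, 14 dBm transmit power, and per-SF SNR values (as commonly quoted in Semtech datasheets) are assumptions, so treat the output as ballpark figures rather than measurements.

```python
import math

# Approximate minimum demodulation SNR (dB) per spreading factor, as commonly
# quoted in Semtech SX127x datasheets (assumption for this sketch).
REQUIRED_SNR_DB = {7: -7.5, 8: -10.0, 9: -12.5, 10: -15.0, 11: -17.5, 12: -20.0}


def receiver_sensitivity_dbm(sf: int, bandwidth_hz: float = 125e3,
                             noise_figure_db: float = 6.0) -> float:
    """Thermal noise floor + bandwidth + receiver noise figure + required SNR."""
    return -174.0 + 10 * math.log10(bandwidth_hz) + noise_figure_db + REQUIRED_SNR_DB[sf]


def link_budget_db(tx_power_dbm: float, sf: int, antenna_gains_db: float = 0.0) -> float:
    """Maximum path loss the link can tolerate, in dB."""
    return tx_power_dbm + antenna_gains_db - receiver_sensitivity_dbm(sf)


for sf in (7, 12):
    print(f"SF{sf}: sensitivity ~{receiver_sensitivity_dbm(sf):.1f} dBm, "
          f"link budget ~{link_budget_db(14.0, sf):.1f} dB")
```

Under these assumptions, moving from SF7 to SF12 buys about 12.5 dB of extra link budget, at the cost of much longer transmissions.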

Data sent by LoRaWAN end devices gets picked up by gateways nearby and is then routed to a so-called network server. The network server de-duplicates packets (several gateways may have “seen” and forwarded the same radio packet), performs security checks, and eventually routes the information to its actual destination, i.e. the application the devices are sending data to.
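The de-duplication step can be pictured with a minimal sketch like the one below. It assumes that all the receptions passed in were collected during one short de-duplication window, groups copies by their raw radio frame bytes, and keeps every gateway's metadata, since that metadata is what later feeds network optimization. The class and function names are hypothetical; The Things Stack's actual implementation is more involved.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class GatewayReception:
    """One copy of an uplink, as forwarded by one gateway (hypothetical model)."""
    gateway_id: str
    phy_payload: bytes  # raw radio frame; identical across gateways for the same uplink
    rssi_dbm: float
    snr_db: float


def deduplicate(receptions):
    """Collapse copies of the same frame into one entry that keeps all gateway metadata.

    Assumes every reception passed in was collected within one de-duplication window.
    """
    groups = defaultdict(list)
    for reception in receptions:
        groups[reception.phy_payload].append(reception)

    uplinks = []
    for payload, copies in groups.items():
        best = max(copies, key=lambda r: r.snr_db)
        uplinks.append({
            "phy_payload": payload,
            "gateways": [c.gateway_id for c in copies],
            "best_gateway": best.gateway_id,
        })
    return uplinks


copies = [
    GatewayReception("gw-north", b"\x40\x34\x12\x01\x26\x00\x01\x00", -118.0, -9.5),
    GatewayReception("gw-south", b"\x40\x34\x12\x01\x26\x00\x01\x00", -97.0, 4.0),
]
print(deduplicate(copies))
```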

LoRaWAN end nodes are usually fairly "dumb" battery-powered devices (e.g. soil moisture sensors, parking occupancy sensors, …) with very limited knowledge of their radio environment. For example, a node may be very close to a gateway and yet transmit with much more power than necessary, wasting precious battery energy in the process. That is why one of the duties of a LoRaWAN network server is to consolidate the metrics collected by the field gateways in order to optimize the network. If a gateway reports a really strong signal from a sensor, it might make sense to send a downlink packet to that device so that it can try using slightly less power for future transmissions.
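This mechanism is known as Adaptive Data Rate (ADR). The sketch below is a simplified take on the approach Semtech recommends in its ADR guidance, not The Things Stack's own algorithm. It computes the margin between the best recent SNR and the SNR required at the current spreading factor, converts that margin into 3 dB steps, and spends positive steps first on a faster data rate, then on lower transmission power. The installation margin, power limits, step size, and SNR table are assumed values chosen for illustration.

```python
# Approximate minimum demodulation SNR (dB) per spreading factor (assumed values).
REQUIRED_SNR_DB = {7: -7.5, 8: -10.0, 9: -12.5, 10: -15.0, 11: -17.5, 12: -20.0}


def adr_recommendation(recent_snrs_db, sf, tx_power_dbm,
                       installation_margin_db=10.0, min_sf=7,
                       min_tx_power_dbm=2.0, max_tx_power_dbm=14.0, step_db=3.0):
    """Suggest a (spreading factor, TX power) pair from recent uplink SNRs."""
    margin_db = max(recent_snrs_db) - REQUIRED_SNR_DB[sf] - installation_margin_db
    steps = int(margin_db / step_db)  # truncates toward zero

    # Spare margin: speed up first (lower SF = shorter airtime), then lower power.
    while steps > 0 and sf > min_sf:
        sf -= 1
        steps -= 1
    while steps > 0 and tx_power_dbm - step_db >= min_tx_power_dbm:
        tx_power_dbm -= step_db
        steps -= 1

    # Missing margin: raise power back up (the data rate is left unchanged).
    while steps < 0 and tx_power_dbm + step_db <= max_tx_power_dbm:
        tx_power_dbm += step_db
        steps += 1

    return sf, tx_power_dbm


# A node very close to a gateway, currently using SF12 at 14 dBm.
print(adr_recommendation([8.0, 9.5, 7.0], sf=12, tx_power_dbm=14.0))  # (7, 11.0)
```

In practice, the network server would then send the device a downlink (a `LinkADRReq` MAC command) carrying the new settings.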

Since LoRa operates in unlicensed spectrum, anyone who follows their local radio regulations can freely connect LoRa devices, or even operate their own network.

My private LoRaWAN server, why?

The LoRaWAN specification puts a really strong focus on security, and by no means do I want to make you think that rolling out your own networking infrastructure is mandatory to make your LoRaWAN solution secure. In fact, LoRaWAN has a pretty elegant way of securing communications, while keeping the protocol lightweight. There is a lot of literature on the topic that I encourage you to read but, in a nutshell, the protocol makes it almost impossible for malicious actors to impersonate your devices (messages are signed and protected against replay attacks) or access your data (your application data is seen by the network server as an opaque, ciphered, payload).
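As an illustration of the replay protection mentioned above, here is a minimal sketch of the frame-counter check a network server performs. Uplinks only carry the 16 least significant bits of the frame counter, so the server rebuilds the full 32-bit value and rejects anything that does not move it forward within an allowed gap. The 16384 gap is the `MAX_FCNT_GAP` value from LoRaWAN 1.0.x and is an assumption here. In a real server, the reconstructed counter also feeds into the message integrity code (MIC) check, which is what actually authenticates the frame; that part is left out, and the helper names are hypothetical.

```python
MAX_FCNT_GAP = 16384  # LoRaWAN 1.0.x value, assumed for this sketch


def reconstruct_fcnt(last_fcnt: int, fcnt16: int) -> int:
    """Rebuild a 32-bit frame counter from the 16 bits carried in the frame header."""
    candidate = (last_fcnt & 0xFFFF0000) | fcnt16
    if candidate <= last_fcnt:
        candidate += 0x10000  # assume the 16 low bits wrapped around
    return candidate


class ReplayGuard:
    """Tracks the last accepted uplink counter per device address (hypothetical helper)."""

    def __init__(self) -> None:
        self._last_fcnt: dict[int, int] = {}

    def accept(self, dev_addr: int, fcnt16: int) -> bool:
        last = self._last_fcnt.get(dev_addr)
        if last is None:
            full = fcnt16  # first uplink seen for this session
        else:
            full = reconstruct_fcnt(last, fcnt16)
            if full - last > MAX_FCNT_GAP:
                return False  # replayed frame, or a jump larger than the allowed gap
        self._last_fcnt[dev_addr] = full
        return True


guard = ReplayGuard()
print(guard.accept(0x26011234, 1))  # True
print(guard.accept(0x26011234, 1))  # False: same counter replayed
```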

So why should you bother rolling your own LoRaWAN network server anyway?

Coverage where you need it

In most cases, relying on a public network operator means depending on their coverage. While some operators might allow a hybrid model where you attach your own gateways to their network, and hence extend coverage right where you need it, oftentimes you don't get to decide how well a particular geographical area will be covered by a given operator.

When rolling out your own network server, you end up managing your own fleet of gateways, bringing you more flexibility in terms of coverage, network redundancy, etc.

Data ownership

While operating your own server will not necessarily add a lot in terms of pure security (after all, your LoRaWAN packets are hanging in the open air a good chunk of their lifetime anyway!), being your own operator definitely brings you more flexibility to know and control what happens to your data once it’s reached the Internet.

What about the downsides?

It goes without saying that operating your own network is no small feat, and you should obviously do your due diligence with regard to the potential challenges, risks, and costs of keeping it up and running.

Anyway, it is high time I told you how you'd go about rolling out your own LoRaWAN network, right?

The Things Stack on Azure

The Things Stack is an open-source LoRaWAN network server that supports all versions of the LoRaWAN specification and operation modes. It is actively being maintained by The Things Industries and is the underlying core of their commercial offerings.

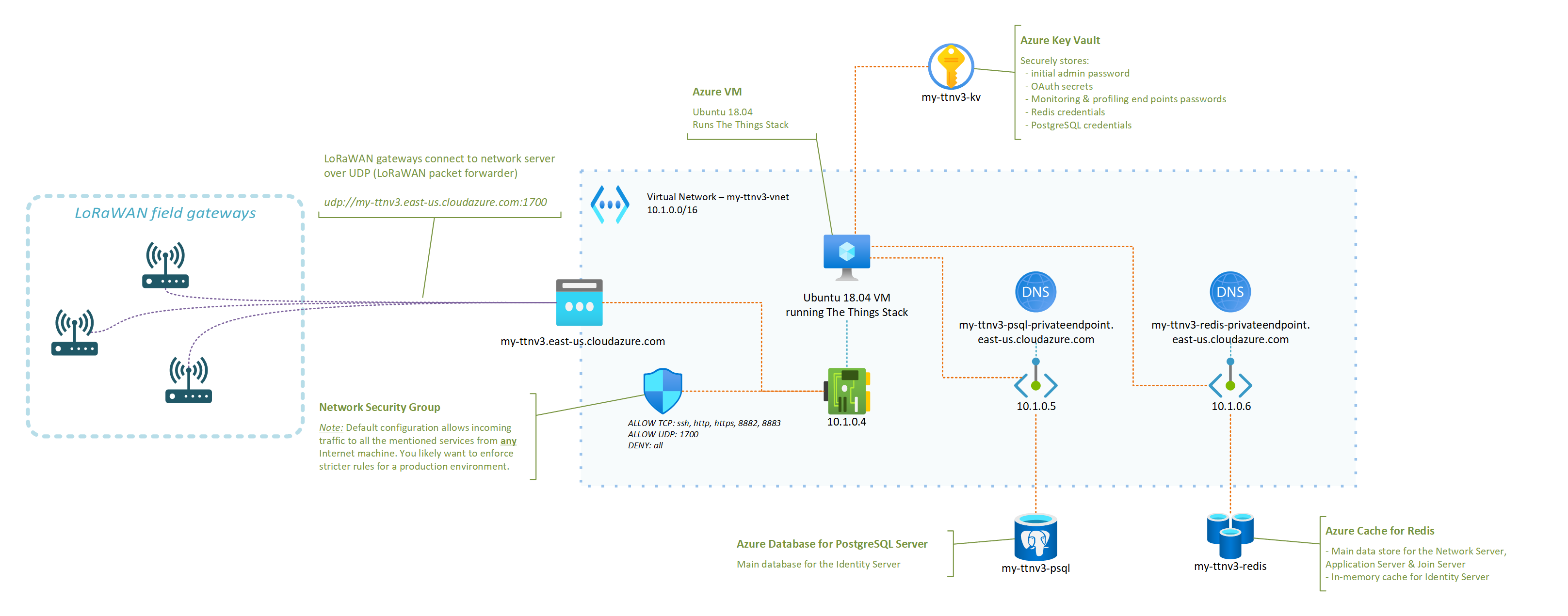

A typical/minimal deployment of The Things Stack network server relies on roughly three pillars:

- A Redis in-memory data store supporting the operation of the network;

- An SQL database (PostgreSQL or CockroachDB are supported) storing information about the gateways, devices, and users of the network;

- The actual stack, running the different services that power the web console, the network server itself, etc.

The deployment model recommended for someone interested in quickly testing out The Things Stack is to use their Docker Compose configuration. It fires up all the services mentioned above as Docker containers on the same machine. Pretty cool for testing, but not so much for a production environment: who is going to keep those Redis and PostgreSQL services available 24/7, properly backed up, etc.?
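When swapping those containers for managed services, the stack mostly needs to be told where to find them. The sketch below builds the two relevant settings as environment variables (The Things Stack maps its configuration options to `TTN_LW_`-prefixed variables, such as `TTN_LW_IS_DATABASE_URI` and `TTN_LW_REDIS_ADDRESS`). The host names and credentials are placeholders, and `sslmode=require` and port 6380 assume Azure Database for PostgreSQL and Azure Cache for Redis with TLS enforced. Redis authentication and TLS options are deliberately left out; check the stack's configuration reference for those.

```python
from urllib.parse import quote


def tts_managed_services_env(pg_host: str, pg_user: str, pg_password: str,
                             pg_database: str, redis_host: str,
                             redis_port: int = 6380) -> dict[str, str]:
    """Environment variables pointing The Things Stack at managed PostgreSQL and Redis."""
    # Credentials may contain characters such as '@' or ':' that must be URL-encoded.
    user = quote(pg_user, safe="")
    password = quote(pg_password, safe="")
    database_uri = (f"postgres://{user}:{password}@{pg_host}:5432/"
                    f"{pg_database}?sslmode=require")
    return {
        "TTN_LW_IS_DATABASE_URI": database_uri,
        "TTN_LW_REDIS_ADDRESS": f"{redis_host}:{redis_port}",
    }


env = tts_managed_services_env(
    pg_host="my-lorawan.postgres.database.azure.com",  # placeholder
    pg_user="ttsadmin",                                  # placeholder
    pg_password="p@ss:word",                             # placeholder
    pg_database="ttn_lorawan",
    redis_host="my-lorawan.redis.cache.windows.net",    # placeholder
)
for name, value in env.items():
    print(f"{name}={value}")
```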

I have put together a set of instructions and a deployment template that show what a LoRaWAN server based on The Things Stack and running in Azure could look like.

The instructions in the GitHub repository linked below should be all you need to get your very own server up and running!

In fact, you only have a handful of parameters to tweak (what fancy nickname to give your server, credentials for the admin user, …) and the deployment template will do the rest!
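For reference, ARM deployments usually take their parameters from a JSON file like the one generated below. The layout (`$schema`, `contentVersion`, and a `parameters` object of `{"value": ...}` entries) is the standard ARM parameters format, but the parameter names used here are hypothetical: check the template in the repository for the actual ones.

```python
import json


def arm_parameters_file(values: dict) -> str:
    """Serialize values into the standard ARM deployment parameters format."""
    document = {
        "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentParameters.json#",
        "contentVersion": "1.0.0.0",
        "parameters": {name: {"value": value} for name, value in values.items()},
    }
    return json.dumps(document, indent=2)


# Hypothetical parameter names: use the ones declared in the actual template.
content = arm_parameters_file({
    "serverNickname": "my-lorawan",
    "adminEmail": "admin@example.com",
    "adminPassword": "change-me",
})
print(content)
```

Saved as `parameters.json`, such a file can be passed to the Azure CLI, for example with `az deployment group create --resource-group <your-rg> --template-file <template>.json --parameters @parameters.json`. Just avoid committing a file that contains real credentials.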

OK, I deployed my network server in Azure, now what?

Just to enumerate a few, here are some of the things that having your own network server, running in your own Azure subscription, will enable. Some will sound oddly specific if you don’t have a lot of experience with LoRaWAN yet, but they are important nevertheless. You can:

- benefit from managed Redis and PostgreSQL services, without having to worry about rolling out security fixes, performing regular backups, etc.;

- control which LoRaWAN gateways can connect to your network server, since you can tweak your Network Security Group to only allow specific IPs to reach its UDP packet forwarder endpoint;

- completely isolate the internals of your network server from the public Internet (including the Application Server, if you wish), putting you in a better position to control and secure your business data;

- scale your infrastructure up or down as the size and complexity of the fleet you are managing evolve;

- … and there is probably so much more. I’m actually curious to hear in the comments below about other benefits (or downsides, for that matter) you’d see.
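To make the gateway-filtering point more concrete, here is a minimal sketch of what reaches the UDP packet forwarder endpoint (UDP port 1700 by default in Semtech's packet forwarder) and of the allowlist logic an NSG rule would express. It parses the header of a Semtech UDP protocol (version 2) PUSH_DATA datagram (protocol version, 2-byte random token, identifier, 8-byte gateway EUI, then a JSON body) and builds the matching PUSH_ACK. The allowed address ranges are hypothetical documentation addresses; in Azure, the filtering itself happens in the NSG, before any packet reaches the VM.

```python
import ipaddress
import json

PROTOCOL_VERSION = 2
PUSH_DATA = 0x00
PUSH_ACK = 0x01

# Hypothetical allowlist (documentation address ranges): the public IPs your
# gateways connect from. In Azure, an NSG rule enforces this before the VM.
ALLOWED_SOURCES = [
    ipaddress.ip_network("203.0.113.0/28"),
    ipaddress.ip_network("198.51.100.7/32"),
]


def is_allowed(source_ip: str) -> bool:
    address = ipaddress.ip_address(source_ip)
    return any(address in network for network in ALLOWED_SOURCES)


def handle_push_data(source_ip: str, datagram: bytes):
    """Return (gateway EUI, JSON body, PUSH_ACK datagram), or None if dropped."""
    if not is_allowed(source_ip):
        return None
    # Header: version (1 byte), random token (2), identifier (1), gateway EUI (8).
    if len(datagram) < 12 or datagram[0] != PROTOCOL_VERSION or datagram[3] != PUSH_DATA:
        return None
    token = datagram[1:3]
    gateway_eui = datagram[4:12].hex()
    body = json.loads(datagram[12:]) if len(datagram) > 12 else {}
    ack = bytes([PROTOCOL_VERSION]) + token + bytes([PUSH_ACK])
    return gateway_eui, body, ack


packet = bytes([2, 0xAB, 0xCD, PUSH_DATA]) + bytes.fromhex("b827ebfffe000001") + b'{"stat":{}}'
print(handle_push_data("203.0.113.5", packet))
print(handle_push_data("192.0.2.10", packet))  # not allowlisted: None
```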

I have started to put together an FAQ in the GitHub repository, so hopefully your most obvious questions are already answered there. However, there is one question I thought was worth calling out in this post: "How big of a fleet can I connect?"

It turns out that even a reasonably small VM like the one used in the deployment template (2 vCPUs, 4 GB of RAM) can already handle thousands of nodes and hundreds of gateways. If you want to conduct your own stress-testing experiments, you may find this LoRaWAN traffic simulation tool that I wrote helpful.
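To put "thousands of nodes" into perspective before running a full simulation, a quick estimate of the average message rate is a good start. The figures in the example (5000 nodes, one uplink every 15 minutes, each uplink heard by 3 gateways on average) are hypothetical.

```python
def uplinks_per_second(nodes: int, interval_s: float) -> float:
    """Average uplink rate for a fleet where every node transmits once per interval."""
    return nodes / interval_s


def gateway_messages_per_second(nodes: int, interval_s: float,
                                gateways_per_uplink: float) -> float:
    """Messages the network server receives before de-duplication."""
    return uplinks_per_second(nodes, interval_s) * gateways_per_uplink


if __name__ == "__main__":
    rate = uplinks_per_second(5000, 15 * 60)
    raw = gateway_messages_per_second(5000, 15 * 60, 3)
    print(f"{rate:.1f} uplinks/s, {raw:.1f} gateway messages/s before de-duplication")
```

Averages hide bursts (for example, many sensors waking up at the same time), which is exactly what a stress test helps uncover.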

What’s next?

You should definitely expect more from me when it comes to other LoRaWAN related articles in the future. From leveraging DTDL for simplifying end application development and interoperability with other solutions, to integrating with Azure IoT services, there’s definitely a lot more to cover. Stay tuned, and please let me know in the comments of other related topics you’d like to see covered!

If you enjoyed this article, don’t forget to subscribe to this blog to be notified of upcoming publications! And of course, you can also always find me on Twitter and Mastodon.

7 replies on “Deploying a LoRaWAN network server on Azure”

Hi Benjamin,

How would you go about upgrading the NS to a newer version? The version installed by your scripts is v3.10.7, and the latest is 3.14.0. The installation in Azure doesn't seem to use Docker images but runs as a daemon, so I couldn't find any upgrade guide in the TTN documentation.

Greetings, Jakob! I am assuming you are interested in upgrading an *existing* NS install, that you’ve initially deployed using the ARM template? Or is it that you’re asking how to upgrade the ARM template itself so that it deploys a more recent version of the Things Stack?

If the latter, then I have just updated the ARM template on my GitHub repo and it is now deploying v3.14.1.

If the former, it might be more complicated as you probably need to perform some database schema migrations, and things like that, but happy to try to help and/or work with you on documenting things directly in the repo, maybe?

Cheers… and apologies for the delayed response!

Thanks for the response, and no worries. I have experimented on my own with upgrading an existing installation. Great that the ARM template is now at 3.14.0; I might end up having to reinstall everything, because I haven't been able to get the upgrade to work.

According to the official TTN guide, there is little if any information available on how to upgrade when not using Docker. I do think a lot of people want to set up and run the Stack the way you did in your guide, as a more production-like setup.

I'll be happy to collaborate on how to do an upgrade, because always doing a fresh install will not be an option, especially if you have a lot of gateways and sensors provisioned.

You have my email from the comment form if you want to look into how to handle an upgrade together.

Hi Benjamin,

Thanks for sharing!

Why did you use a VM instead of an Azure Container Instance?

I'm trying to find out whether I could avoid using a VM altogether in this kind of deployment.

Thanks,

Florent

Hi Florent,

Great question. Doing my best to remember my thought process, as it's been a while, but I think the idea was to find the cheapest path to a fully functional (and yet managed, w.r.t. Redis & PostgreSQL) stack; see the cost info in the README.

I think using an Azure Container Instance should definitely work, especially since you can probably get inspiration from the existing docker-compose.

Hope this helps!

Yes, it definitely helps. I've been going through the docs, and it seems that Azure Container Instances are limited to exposing 5 ports, whereas we would need 6.

I think the VM is the only way around that, since Azure Container Apps also limit the number of exposed ports to 1.

I think the way you did it is the cheapest and still the best way to do it! Thanks for the quick reply!

Congratulations and thank you for this work!

I’m new to Azure services and I was able to get this server up and running without much trouble.

Right now, I'm working on getting something similar to TTN's "Storage Integration", but from the documentation I've found, the open-source version doesn't support that.

Could you point me to a possible way to retrieve the history of uplinks received through this LoRaWAN server?