Greetings, folks! Yeah, it’s been a few weeks… again! I blame the month of May in France that was filled with bridge days. Luckily, this means even more cool news for you to chew on!

Also, we have two upcoming Zephyr Tech Talks after a few quiet weeks, make sure to RSVP to the events so that you don’t miss the opportunity to ask your questions live (and please help spread the word by relaying to your social network, if you feel like it!).

Introducing support for Renesas RX

The Renesas RX family is a line of 32-bit microcontrollers from Renesas Electronics which is positioned as an alternative to Arm Cortex-M.

I personally have no first-hand experience with this architecture, but it seems to be pretty competitive with regards to pure performance, as well as power efficiency. Renesas RX MCUs are a pretty popular choice in applications such as industrial automation, motor control, and healthcare.

It’s not often that Zephyr adds support for a new processor architecture, so let’s celebrate! Also worth noting that the Zephyr SDK 0.17.1, which was just released, provides RX toolchains which you can use to easily build your first apps for the QEMU board or the Renesas Starter Kit for RX130.

Video updates

Zephyr has been shipping with video support for a long time, but over the last year or so, this area has regained interest from various parties and has been incredibly active.

- A new Video Control Framework (PR #82158) is available, providing a standardized way to manage video device parameters.

- A Video Shell (PR #88566) was added, offering command-line utilities for interacting with video devices.

- Added support for many more Bayer, RGB, and YUV pixel formats (PR #88817).

- Common CCI (Camera Control Interface) utilities (PR #87935).

Simplifying downstream patch management with west patch

The west patch command is not new, but it’s only recently that documentation for it was added, so I thought it was worth mentioning.

This command allows you to organize and apply patches to Zephyr or Zephyr modules in a controlled manner, by means of a patches.yml file that stores metadata about the various patches. It allows, for example, to indicate whether a given patch corresponds to an open pull request.

patches:

- path: zephyr/my-zephyr-change.patch

sha256sum: c676cd376a4d19dc95ac4e44e179c253853d422b758688a583bb55c3c9137035

module: zephyr

author: Obi-Wan Kenobi

email: [email protected]

date: 2025-05-04

upstreamable: false

comments: |

An application-specific change we need for Zephyr.

- path: bootloader/mcuboot/my-tweak-for-mcuboot.patch

sha256sum: e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855

module: mcuboot

author: Darth Sidious

email: [email protected]

date: 2025-05-04

merge-pr: https://github.com/zephyrproject-rtos/zephyr/pull/<pr-number>

issue: https://github.com/zephyrproject-rtos/zephyr/issues/<issue-number>

merge-status: true

merge-commit: 1234567890abcdef1234567890abcdef12345678

merge-date: 2025-05-06

apply-command: git apply

comments: |

A change to mcuboot that has been merged already. We can remove this

patch when we are ready to upgrade to the next Zephyr release.

New boards and SoCs

Some of the other boards and SoCs that caught my attention. As a reminder, there’s a new board being added to Zephyr almost every day, so the list below is not exhaustive!

- Initial support for the Cortex-R52 based Versal Gen 2 SoC RPU (Real-time Processing Unit) (PR #88667).

- ESP32-S3-Matrix (PR #84634).

- Ezurio BL54L10/L15/L15u DVK (PR #87525).

- STM32U5G9J-DK1 (PR #88550) and DK2 (PR #88787).

- Texas Instruments MSPM0 SoC series and MSPM0G3507 Launchpad board (PR #88725).

- Teensy Micromod (PR #88502).

- Several new WCH—remember? I already mentioned these crazy cheap RISC-V MCUs a while back—boards and correspoiding SoCs:

- CH32V203 SoC and WeactStudio CH32V203 Blue Pill plus (PR #87490).

- CH32V00x series and CH32V006EVT (PR#89361).

Drivers

- New sensor drivers:

- Numerous new display controller drivers were introduced, including SH1122 (PR #87431), SSD1320 (PR #87169), SSD1331 (PR#88178), SSD1351 (PR #89566), and ST75256 (PR #87584), all by @VynDragon 🚀

- Auxiliary Display: Driver for common 7-segment displays (PR #68501), and LCD1602 (PR #89654).

- New UART bridge driver (PR #89110) allows to configure bridge between two serial devices, for example a USB CDC-ACM serial port and a hardware UART.

uart-bridge {

compatible = "zephyr,uart-bridge";

peers = <&cdc_acm_uart0 &uart1>;

};

- Support for ADI TMC51xx stepper motor controller (PR #88350)

- USB Device Controller for Atmel SAM0 (PR #86814).

- AIROC Wi-Fi driver supports new devices and modules (PR #86137).

Miscellaneous

- It can sometimes be hard to keep track of all the files (.dts, .dtsi, overlays…) that make up your device’s Devicetree. The

zephyr.dtsfile that’s generated as part of the Zephyr build now includes comments that indicate where each given node or property was last touched. It makes it much easier to find that damn overlay that keeps having the last word 🙂

/ {

#address-cells = < 0x1 >; /* in zephyr/dts/common/skeleton.dtsi:10 */

#size-cells = < 0x1 >; /* in zephyr/dts/common/skeleton.dtsi:11 */

model = "Wio Terminal"; /* in zephyr/boards/seeed/wio_terminal/wio_terminal.dts:15 */

compatible = "seeed,wio-terminal"; /* in zephyr/boards/seeed/wio_terminal/wio_terminal.dts:16 */

/* node '/chosen' defined in zephyr/dts/common/skeleton.dtsi:12 */

chosen {

zephyr,flash-controller = &nvmctrl; /* in zephyr/dts/arm/atmel/samd5x.dtsi:52 */

zephyr,entropy = &trng; /* in zephyr/dts/arm/atmel/samd5x.dtsi:51 */

zephyr,sram = &sram0; /* in zephyr/boards/seeed/wio_terminal/wio_terminal.dts:19 */

zephyr,flash = &flash0; /* in zephyr/boards/seeed/wio_terminal/wio_terminal.dts:20 */

zephyr,code-partition = &code_partition; /* in zephyr/boards/seeed/wio_terminal/wio_terminal.dts:21 */

zephyr,display = &ili9341; /* in zephyr/boards/seeed/wio_terminal/wio_terminal.dts:22 */

zephyr,console = &board_cdc_acm_uart; /* in zephyr/boards/common/usb/cdc_acm_serial.dtsi:9 */

zephyr,shell-uart = &board_cdc_acm_uart; /* in zephyr/boards/common/usb/cdc_acm_serial.dtsi:10 */

zephyr,uart-mcumgr = &board_cdc_acm_uart; /* in zephyr/boards/common/usb/cdc_acm_serial.dtsi:11 */

zephyr,bt-mon-uart = &board_cdc_acm_uart; /* in zephyr/boards/common/usb/cdc_acm_serial.dtsi:12 */

zephyr,bt-c2h-uart = &board_cdc_acm_uart; /* in zephyr/boards/common/usb/cdc_acm_serial.dtsi:13 */

};

...

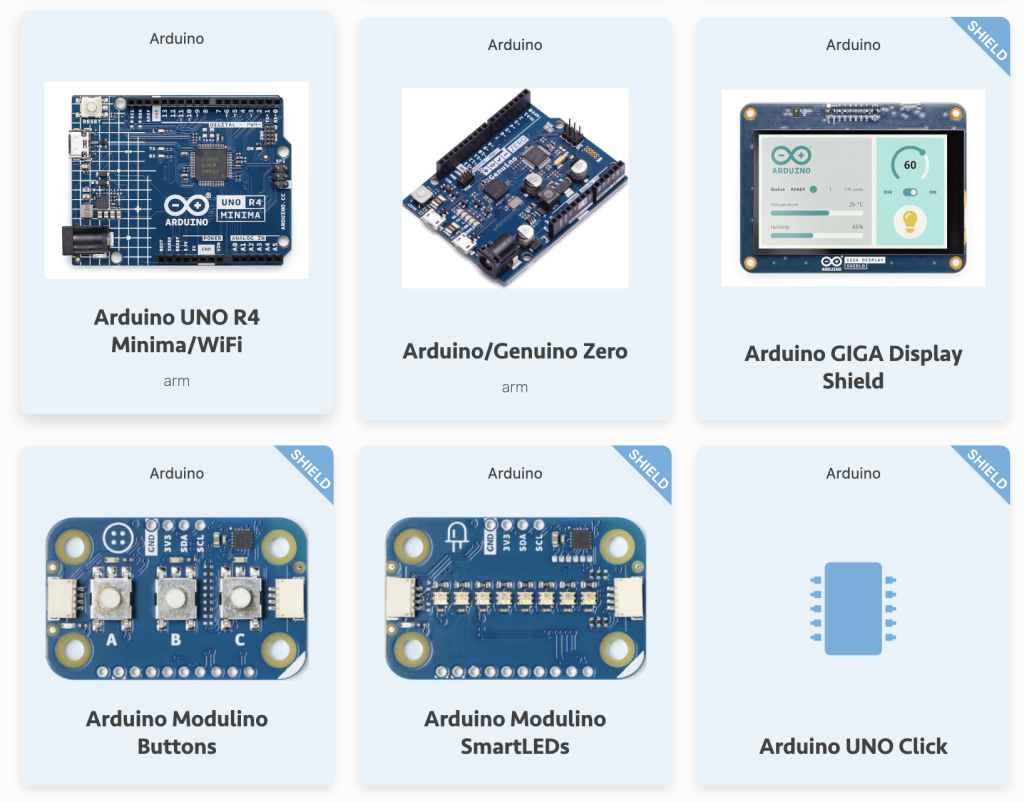

- The board catalog now also includes shields as part of the listing. There are currently 150+ shields supported in Zephyr so it’s nice that it will now be easier to search for them more efficiently.

- CoAP Secure (CoAPS) support has been added, allowing to use DTLS to secure CoAP communication. (PR #65963).

- LLEXT now has basic support for x86 architecture (PR #90176) as well as ARC MPU (PR#89118).

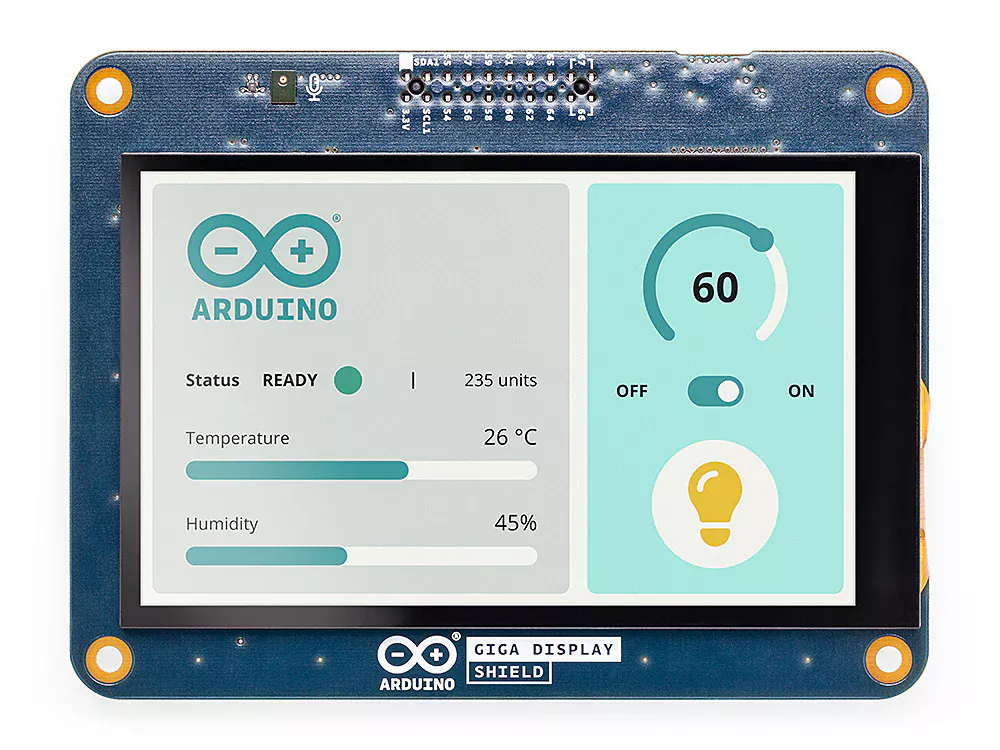

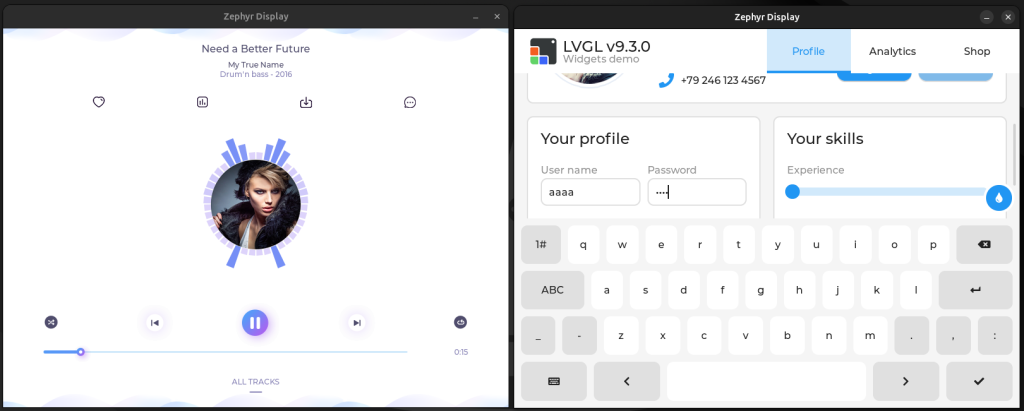

- LVGL now supports multiple displays. (PR #86815).

- Support for Altera NIOS-II architecture has been dropped. (PR #89762)

- Added

XSI_SINGLE_PROCESSandPOSIX_CLOCK_SELECTIONPOSIX option groups (PR #89068). - Optimizations to the ZMS backend for Settings (PR #87792).

- Added runtime Zbus observers pool (PR #88834) for dynamic observer registration.

westnow offers basic PowerShell auto-completion. (PR #88358)

A big thank you to the 73 (😬) individuals who had their first pull request accepted since my last post, 💙 🙌: @herculanodavi, @ZhaoQiang-b45475, @abhinavnxp, @batkay, @fiveohhh, @gudipudiramanakumar, @mefromac, @segerlund, @alexapostolu, @dsseng, @sayooj-atmosic, @edt-alex, @alrodlim, @Ole2mail, @cfunes-pragma, @cdrask, @wjklimek1, @squiniou, @Kronosblaster, @morishitaandre, @dependabot[bot], @PaulSchaetzle, @zbas, @sandro97git, @tobiwan88, @jsbatch, @gcopoix, @TomasBarakNXP, @mikolaj-klikowicz, @AnElderlyFox, @cbrake, @shiftee, @yolaval, @FKainka, @benson0715, @ct-lt, @Brandon-Hurst, @hdou, @jeremydick, @bastienjauny, @iwasz, @duerrfk, @ebmmy, @araneusdiadema, @srishtik2310, @jbr7rr, @mihnen, @muahmed-silabs, @leonmariotto, @conweek, @fmoessbauer, @AmaanSingh, @Harry-Martin, @ymleung314, @smfebe, @dantti, @meijemac, @thsieh97, @Vijayakannan, @vytvir, @kylebonnici, @tsi-chung, @yongxu-wang15, @anujdeshpande, @Lorl0rd, @minumn, @120MF, @cmm1981, @nordic-mik7, @dylanHsieh4963, @harristomy, @hfruchet-st, and @vignesh-aerlync.

As always, I very much welcome your thoughts and feedback in the comments below!

If you enjoyed this article, don’t forget to subscribe to this blog to be notified of upcoming publications! And of course, you can also always find me on Twitter and Mastodon.

Catch up on all previous issues of the Zephyr Weekly Update: