It’s already feature freeze day for Zephyr 4.0, and it’s been two months since I last posted a Zephyr Weekly Update. Oops! Personal life got in the way: a baby girl has joined our family since then. So, there’s my excuse! 🙂

Since cramming them all into one post wouldn’t do justice to any of the features introduced over the past few months, I decided to focus on some recent improvements to the Zephyr documentation that you ought to know about.

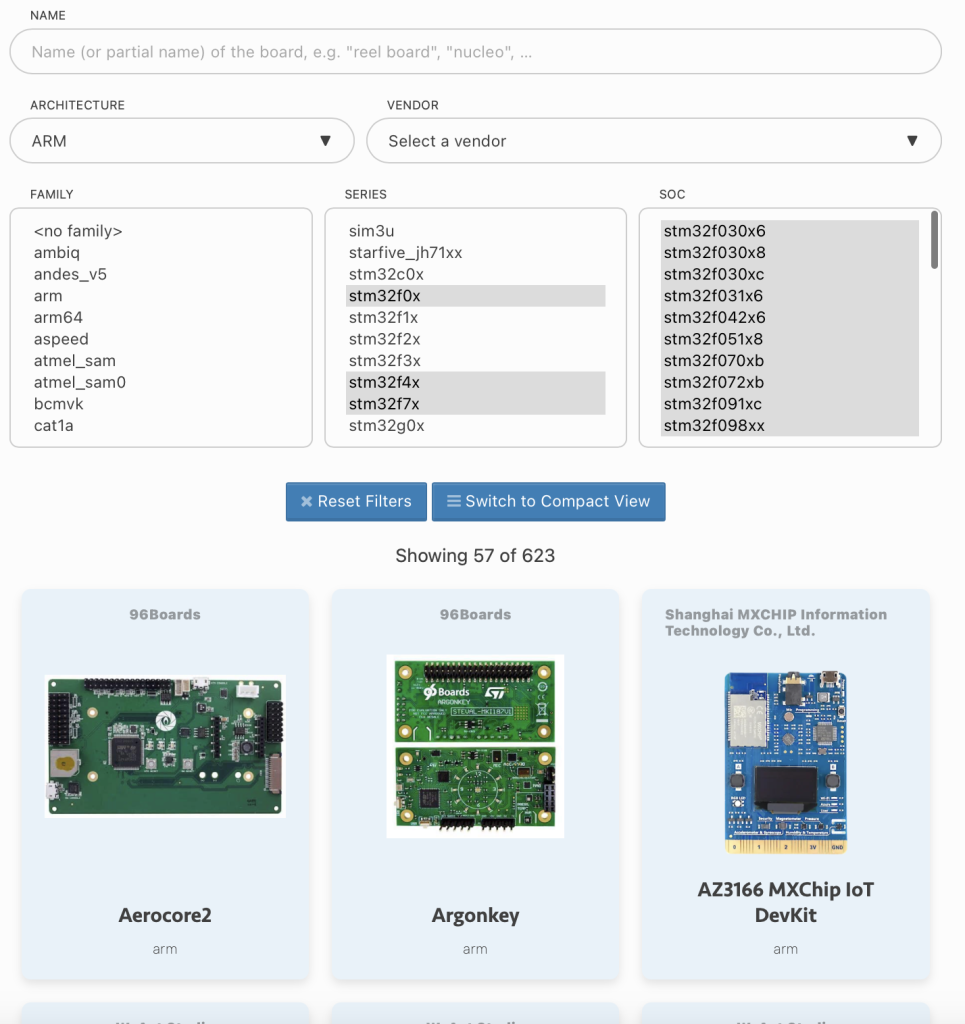

New board catalog

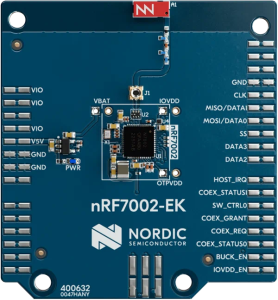

There are well over 600 boards supported in Zephyr, and until very recently we didn’t really provide an easy way to get a sense of which boards were available for a given architecture or SoC family, or from a given vendor. Instead, you would be served a ginormous “flat” list of hundreds of boards that was really hard to navigate.

With the new board catalog, you can now easily filter boards according to various criteria, and narrow down the list of 620+ boards to only the boards that you care about in just seconds.

And more is coming! This catalog will soon also let you do something that’s even more useful when prototyping a new project: filtering by supported hardware capabilities (think: “I am looking for a board with a display and a Bluetooth chip, from vendor Foo. What do you have in stock for me, Zephyr?”).

Towards Zephyrpedia? 🙂

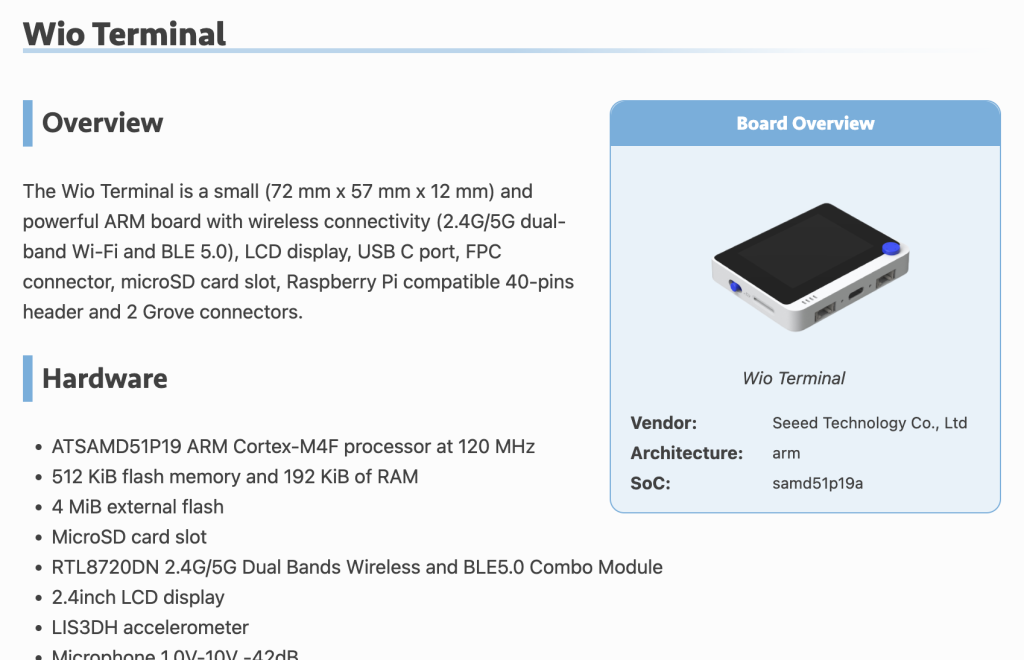

You may have noticed that the documentation page for -most- boards recently changed.

That new card on the side of the page, similar to what you would find on Wikipedia, is a first step towards trying to provide more structure and uniformity to the documentation of the various boards supported in Zephyr and, more importantly, to make sure that every piece of information that can automatically be deduced from things like Devicetree, or the board hardware model, is used to generate the documentation.

The intent is to make it way less likely that things like the Supported Features section of a board’s doc page get outdated, since they will be generated from a single source of truth.

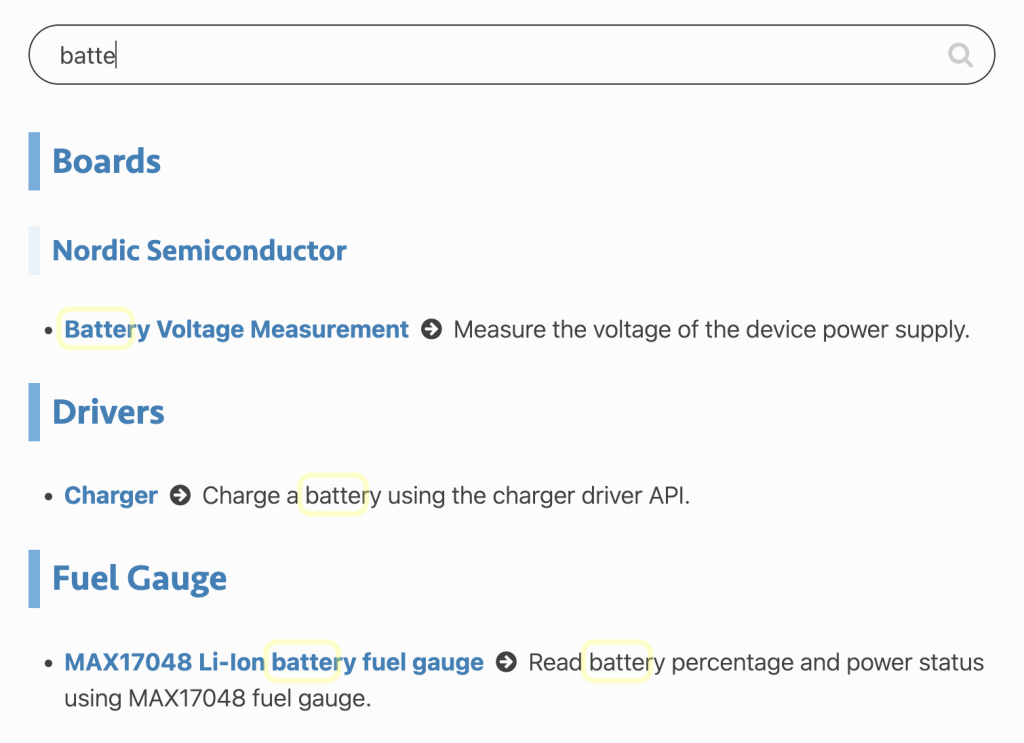

Finding the right code sample

We have nearly 500 different code samples so, as you might have experienced, it can sometimes be hard to find the right sample for your needs.

Somewhat similar to the board catalog, you can now quickly search for samples right from the samples index page. A lot of effort was spent carefully reviewing the descriptions of the samples and making sure they contain the right keywords, so you should be able to find what you’re looking for much more easily.

Try it for yourself: whether you care about mqtt, audio, servo, battery, or whatever else, you will hopefully find at least one sample that will help you get going!

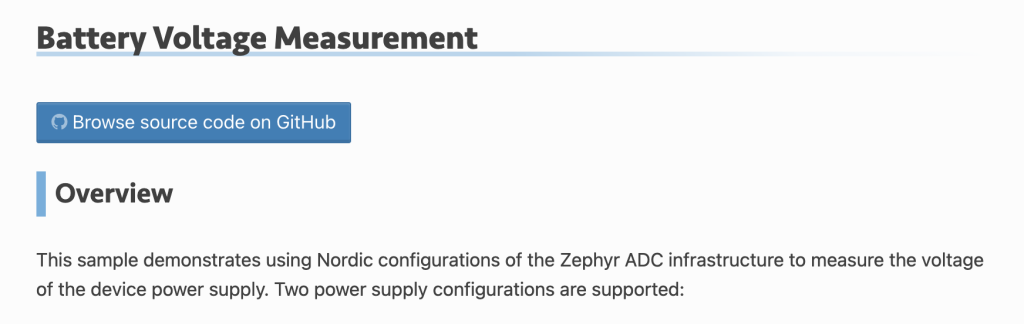

What’s also new is that the README for each code sample now includes a button that takes you directly to the source code of that sample on GitHub!

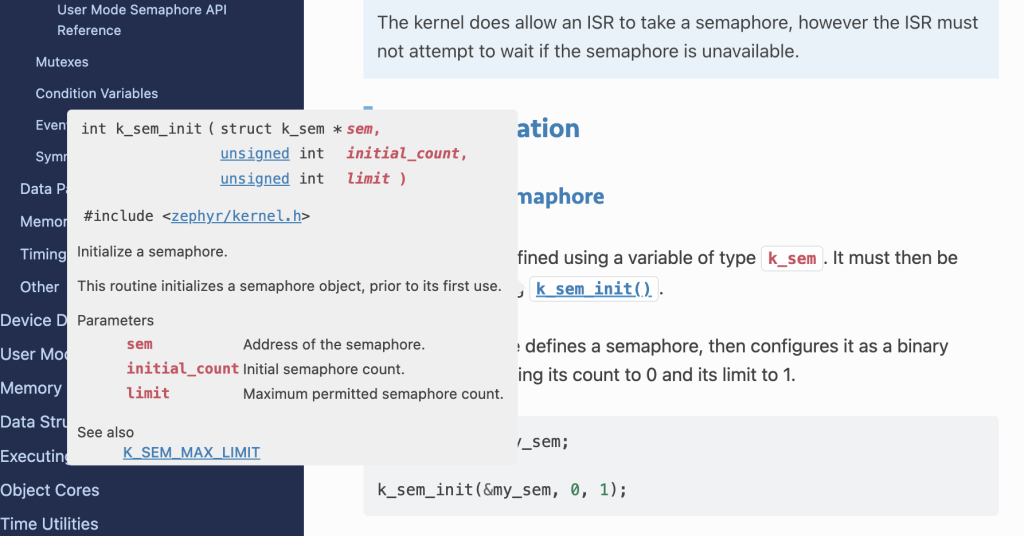

C API tooltips

You may have noticed that the documentation of Zephyr’s C API is not “inlined” in the main documentation pages anymore, and that you are now pointed directly to the Doxygen documentation for the reference documentation of the various APIs.

However, in many cases you probably won’t have to leave the main Zephyr documentation: every time an API is mentioned in the documentation, you can hover over it to get a tooltip that directly shows you the full Doxygen documentation of that API.

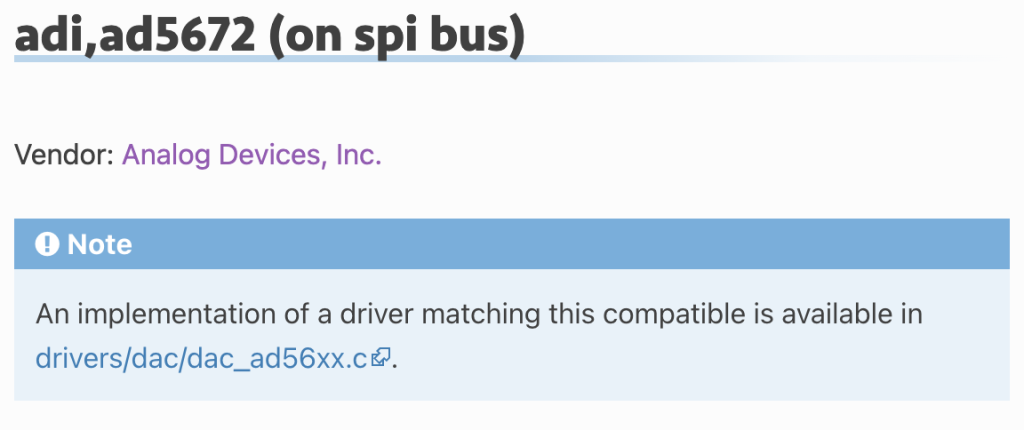

Where’s the driver for that compatible?

Oftentimes, when adding or editing a node in your Devicetree, looking at the documentation of the binding properties is not enough to understand how the actual driver matching the node’s compatible makes use of those properties, and what behavior to expect.

From the Devicetree bindings documentation page, you may now click on the compatible you’re interested in and, alongside the documentation of the various binding properties, go directly to the matching driver’s source code to see how it’s implemented. Handy, eh?
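If you ever need to make that same jump by hand, here is a minimal sketch of the naming convention that typically links a compatible to its driver. It assumes the driver declares its compatible with a `#define DT_DRV_COMPAT <token>` line, where the token is the compatible string lowercased with non-alphanumeric characters turned into underscores. The helper names `compat_to_token` and `driver_define` are made up for this illustration, and the two compatibles are just examples.

```python
import re


def compat_to_token(compatible: str) -> str:
    """Turn a compatible such as "vendor,device-name" into a C identifier token.

    Assumption: lowercase the string and replace every character that is
    not a lowercase letter or a digit with an underscore.
    """
    return re.sub(r"[^a-z0-9]", "_", compatible.lower())


def driver_define(compatible: str) -> str:
    """Build the line you would search for in the drivers' source code."""
    return f"#define DT_DRV_COMPAT {compat_to_token(compatible)}"


if __name__ == "__main__":
    # Example compatibles, picked only for illustration.
    for compat in ("bosch,bme280", "nordic,nrf-uarte"):
        print(f"{compat!r} -> {driver_define(compat)}")
```

Searching the source tree for the resulting line should usually land you in the matching driver, although not every driver is guaranteed to follow this pattern.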

I am sure there are a lot more tips I could share, but I am also quite certain there are things you would like to see implemented in the documentation, so please feel free to join the #documentation channel on Discord to get the conversation going!

As always, I very much welcome your thoughts and feedback in the comments below!

If you enjoyed this article, don’t forget to subscribe to this blog to be notified of upcoming publications! And of course, you can also always find me on Twitter and Mastodon.

Catch up on all previous issues of the Zephyr Weekly Update: